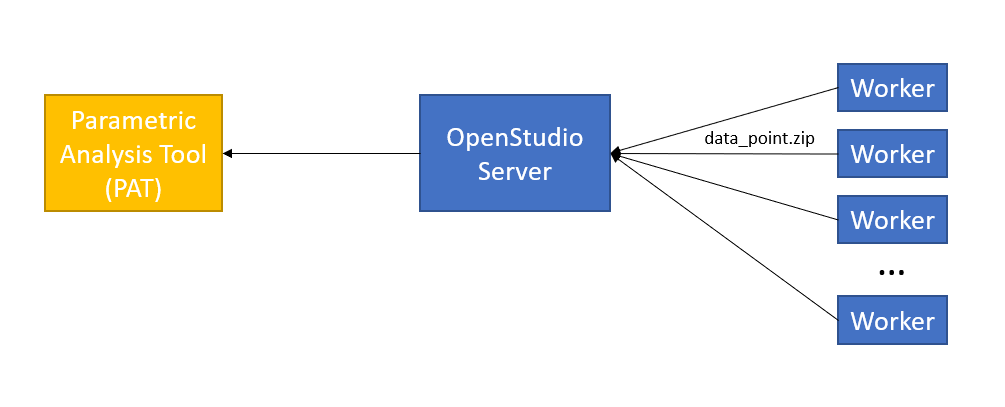

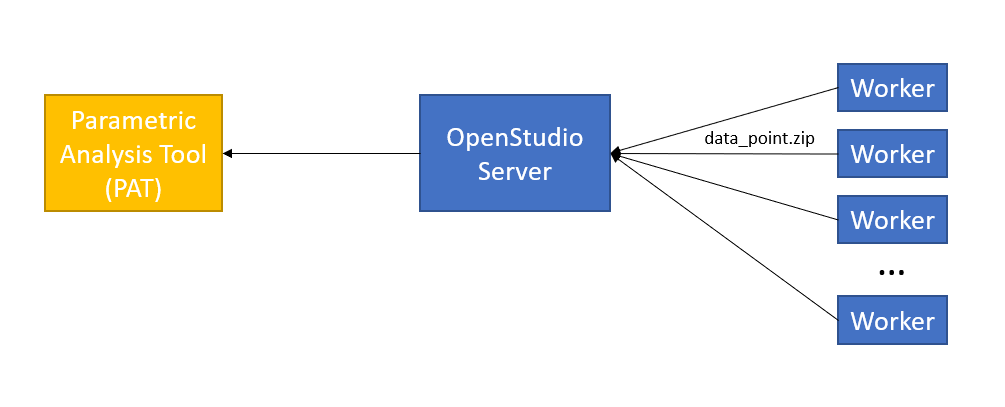

The data_point.zip file exists to support the architecture of OpenStudio-Server, which is what PAT uses to run simulations both locally and in the cloud.

When you run OpenStudio-Server, you are actually running one "server" and one or more "workers." These may all physically be on one computer (as when you run PAT on your laptop) or be split across many computers (as when you run in the cloud). The "server" has the brains and is what you interact with, usually through PAT. The "workers" simply accept jobs, run them, and send back the results, packaged in data_point.zip. See the diagram.

When you are running in the cloud, this is necessary: the results have to get from the computer(s) running the workers to the computer running the server, and from there to your computer running PAT. When all of these processes run on your local computer, the zipping step seems redundant.

Unfortunately this is a foundational aspect of the design of OpenStudio-Server, so it can't be disabled. If you don't need all of the simulation results, you can use the approach suggested in a comment: delete the unnecessary files using a Reporting Measure (which is run before the data_point.zip is created). If you do need those simulation results, you are kind of out of luck.

@Determinant while it isn't a direct way to stop data_point.zip from being made, one option to make it smaller is a reporting measure that throws away many of the files in the run directory before the zip is made. If you are interested in that, I can add an answer with the relevant Ruby code. I don't think we currently have that measure published anywhere. We have used it on large analyses with lots of time series results.
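Before deciding what to delete, it can help to see which files actually dominate the run directory. The following is a minimal plain-Ruby sketch, not part of OpenStudio: `largest_files` is a hypothetical helper name, and it only assumes you know the path to a run directory on disk.

```ruby
require "find"
require "pathname"

# Hypothetical helper (not an OpenStudio API): walks run_dir recursively and
# returns [relative_path, size_in_bytes] pairs for regular files, largest first.
def largest_files(run_dir, limit = 10)
  root = Pathname.new(run_dir)
  files = []
  Find.find(run_dir) do |path|
    next unless File.file?(path)
    relative = Pathname.new(path).relative_path_from(root).to_s
    files << [relative, File.size(path)]
  end
  # Sort by descending size and keep only the top `limit` entries.
  files.sort_by { |_, size| -size }.first(limit)
end

# Example usage: print the biggest files in a local run directory.
# largest_files("run").each { |path, size| puts format("%12d  %s", size, path) }
```

Running this against one finished datapoint's run folder gives a rough idea of which file types are worth targeting in a cleanup measure.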

Thanks @David Goldwasser, yes, please post that. Maybe it can help out.

Hi again @David Goldwasser, just pinging to see if that Reporting Measure can still be made available. Thanks

@Determinant here is example code to put in a reporting measure to delete simulation files. You can repeat this code for any type of file you want to delete. Just make sure this is the last reporting measure in the workflow, so you don't delete files that other reporting measures might need.
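The code from that answer is not reproduced here. As a rough illustration of the same idea, here is a minimal plain-Ruby sketch that deletes files matching a list of glob patterns. `delete_run_files` and `DEFAULT_PATTERNS` are hypothetical names, and the default patterns are only examples of large EnergyPlus outputs. Choose your own based on what your analysis actually needs.

```ruby
# Hypothetical default patterns: illustrative only, edit to suit your analysis.
DEFAULT_PATTERNS = ["eplusout.sql", "eplusout.eso", "*.audit"].freeze

# Hypothetical helper (not an OpenStudio API): deletes every regular file under
# run_dir (searched recursively) whose name matches one of the glob patterns,
# and returns the paths that were removed, relative to run_dir.
def delete_run_files(run_dir, patterns = DEFAULT_PATTERNS)
  prefix = File.join(run_dir, "")
  deleted = []
  patterns.each do |pattern|
    # "**/" also matches zero directories, so top-level files are included.
    Dir.glob(File.join(run_dir, "**", pattern)).each do |path|
      next unless File.file?(path)
      File.delete(path)
      deleted << path.delete_prefix(prefix)
    end
  end
  deleted
end
```

Inside a reporting measure, you would call this from the measure's `run(runner, user_arguments)` method and could log the result with `runner.registerInfo`. The run directory path is an assumption here: we assume the measure executes in its own subfolder of the run directory, so something like `delete_run_files("..")` might be appropriate. Check this against your own workflow before deleting anything.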

Thanks @David Goldwasser, I'll work on converting this to a Reporting Measure.