One option you can consider for cloud runs is OpenStudio Server. It's a Docker instance that includes OpenStudio, EnergyPlus, and Radiance. OpenStudio is an energy modeling platform that uses EnergyPlus and Radiance as simulation engines. While the primary use case for OpenStudio Server is running OpenStudio analyses, you can set up a workflow that loads external IDF files, bypassing the OpenStudio Model (OSM) format. I can point you to an example if you are interested in that.

The algorithmic mode of the OpenStudio Parametric Analysis Tool uses OpenStudio Server deployed on Amazon EC2, but the server can also be deployed on other cloud services or on an organization's internal network.

Updated Answer Below:

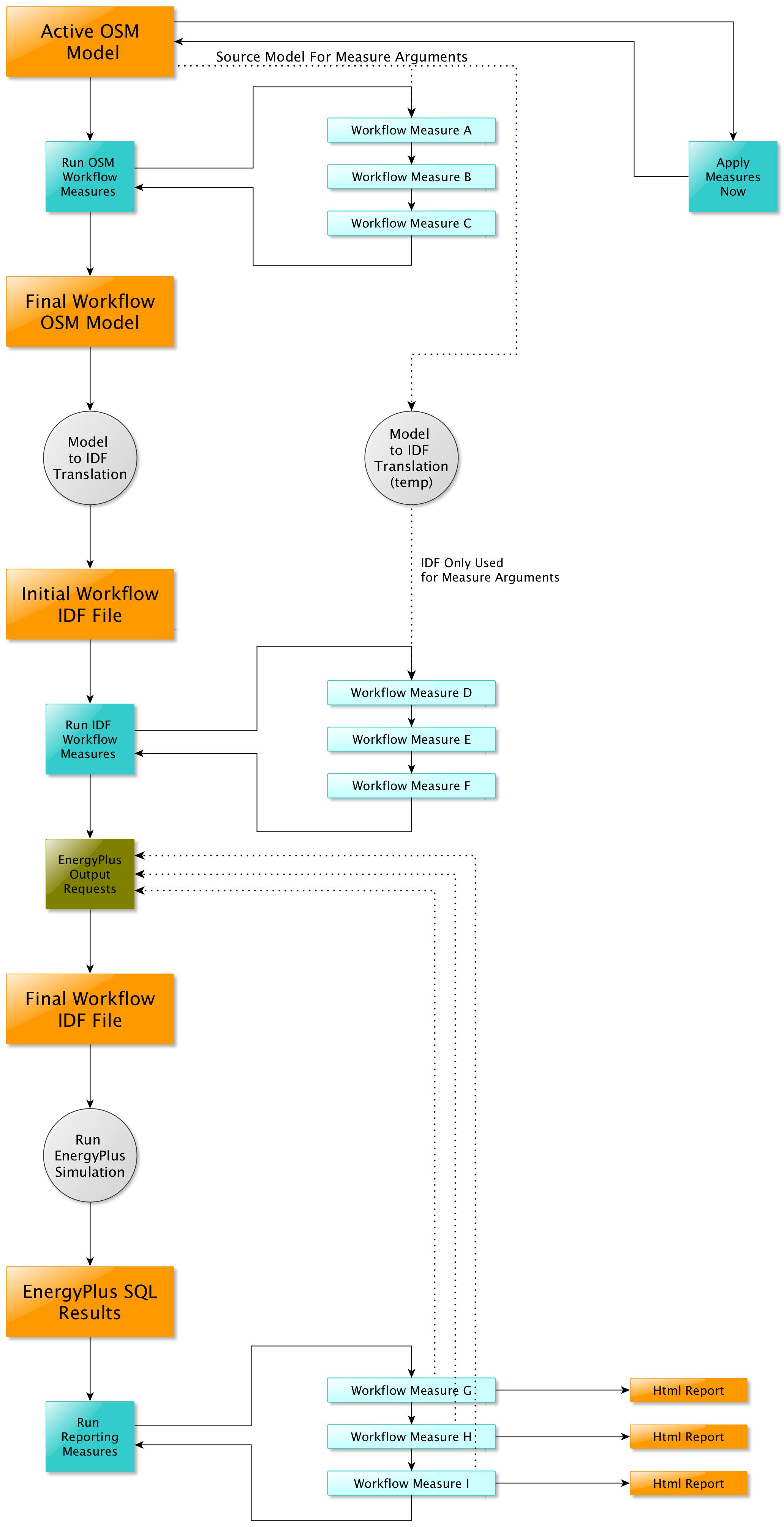

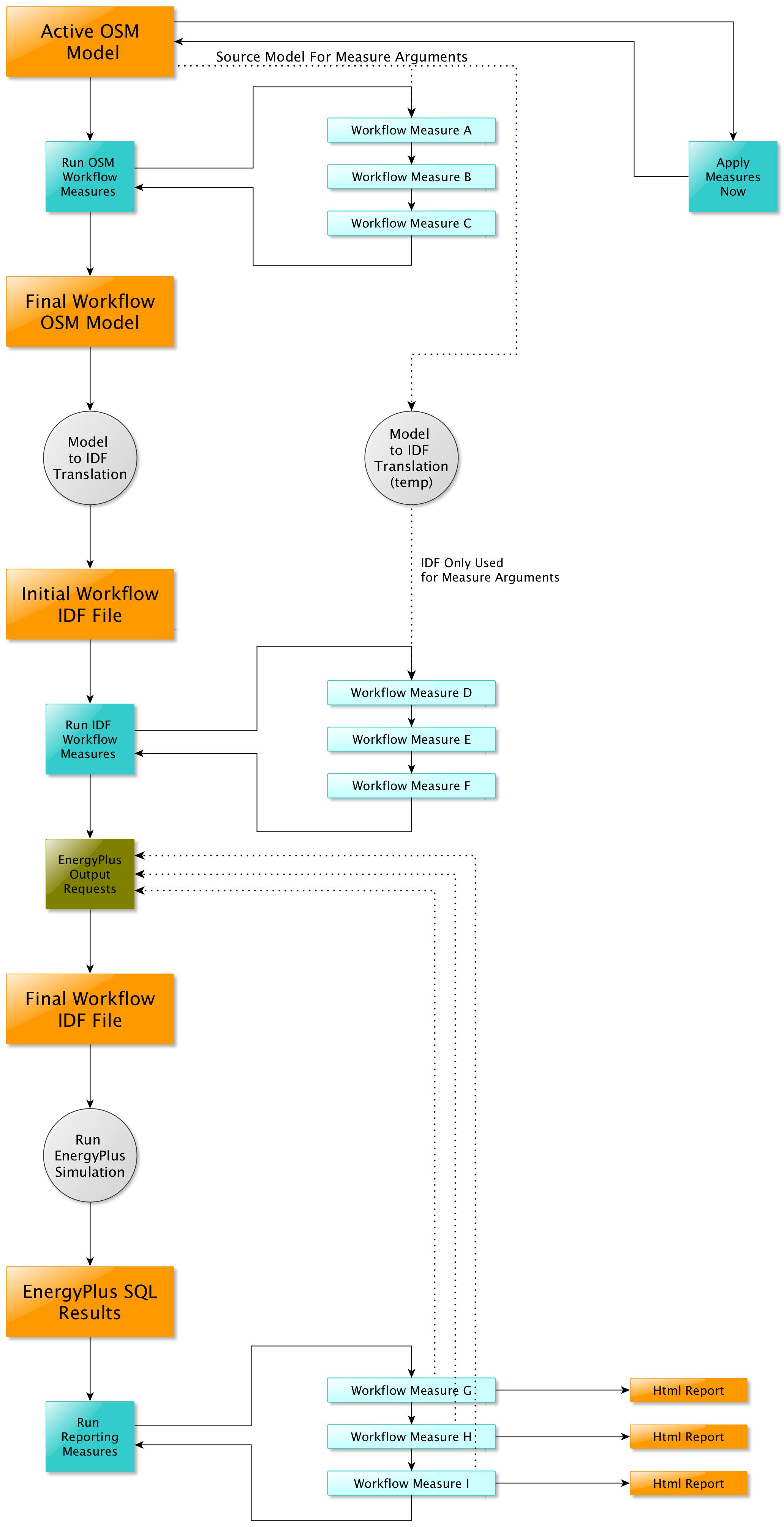

If you use OpenStudio Server, mentioned above, it can handle algorithmic sampling (using R), run management, and results visualization. If, however, you want to handle the parametric analysis elsewhere, you can just run individual datapoints with the OpenStudio CLI. The CLI runs an OpenStudio workflow (OSW), which describes a series of measures applied to a seed OSM, then measures applied to the resulting IDF, and finally post-simulation measures that generate reports. In our BESTEST-GSR GitHub repository we have a sample "Bring your own IDF" OSW, intended as a pattern for users who want to work with the OpenStudio framework but don't want to work directly with the OpenStudio Model (OSM) format. This example workflow still has a seed OSM file, but the contents of that model are replaced by an EnergyPlus measure that loads a user-specified IDF file.
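As a rough illustration of that pattern, here is a minimal Python sketch that writes an OSW file and builds the CLI command to run it. The `seed_file`, `weather_file`, `steps`, `measure_dir_name`, and `arguments` keys follow the OSW format, and `openstudio run -w` is the CLI's workflow command. Everything else is an assumption for illustration: the measure directory names (`load_external_idf`, `example_report`), the `idf_path` argument, and the file names are hypothetical and are not taken from the BESTEST-GSR repository, so check the actual example there for the real names.

```python
import json
from pathlib import Path


def build_byo_idf_osw(idf_path, weather_file, seed_file="empty_seed.osm"):
    """Return a dict shaped like an OSW that swaps the seed model for an external IDF."""
    return {
        "seed_file": seed_file,        # placeholder seed OSM (hypothetical file name)
        "weather_file": weather_file,
        "steps": [
            {
                # Hypothetical EnergyPlus measure that loads the user's IDF
                "measure_dir_name": "load_external_idf",
                "arguments": {"idf_path": idf_path},
            },
            {
                # Hypothetical reporting measure run after the simulation
                "measure_dir_name": "example_report",
                "arguments": {},
            },
        ],
    }


def cli_command(osw_path):
    """Build the OpenStudio CLI invocation for one datapoint (not executed here)."""
    return ["openstudio", "run", "-w", str(osw_path)]


if __name__ == "__main__":
    osw = build_byo_idf_osw("my_building.idf", "my_weather.epw")
    osw_path = Path("workflow.osw")
    osw_path.write_text(json.dumps(osw, indent=2))
    # Pass this list to subprocess.run(...) on a machine with OpenStudio installed
    print(" ".join(cli_command(osw_path)))
```

For a parametric study handled outside OpenStudio Server, you could call `build_byo_idf_osw` once per IDF variant and launch each resulting OSW as a separate datapoint.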

For reference, if you do decide to work with the OSM format and the OpenStudio Model API, you are still producing IDF files in the end, but using the OpenStudio API as a modeling tool. You can decide with your client what is best for your use case.

In the "Bring your own IDF" example above, the external IDF file is brought in where measures D-F are shown in the diagram below.