Internal server error after successful runs

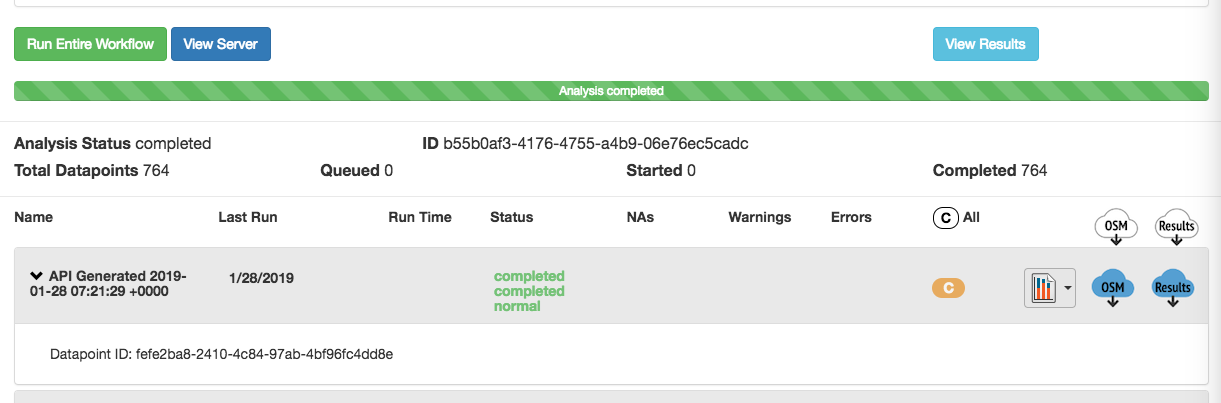

I have an OpenStudio Parametric Analysis Tool (PAT) project in which every measure combination appears to run successfully. However, when I click "View Analysis" to download the results.csv file, I get the following error: "Executor error during find command: OperationFailed: Sort operation used more than the maximum 33554432 bytes of RAM. Add an index, or specify a smaller limit. (96)" Any ideas what is causing this or how to get around it?

Alternatively, is there a better way to match the long ID string that identifies each run in the local results folder with the design alternative I assigned to it? Until now I have been reading that ID/design alternative pairing from the results.csv file.
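For context, the lookup I do by hand from results.csv amounts to roughly the sketch below. The column names (`run_id`, `alternative_name`) and the sample rows are placeholders I made up for illustration, not the actual headers or values in the file, so they would need to be swapped for whatever the real results.csv uses.

```python
import csv
import io


def load_run_names(csv_file, id_column, name_column):
    """Map each run ID to its design alternative name from an open CSV file."""
    reader = csv.DictReader(csv_file)
    return {row[id_column]: row[name_column] for row in reader}


# Made-up sample data standing in for results.csv; real headers may differ.
sample = io.StringIO(
    "run_id,alternative_name\n"
    "aaaa-1111,Baseline\n"
    "bbbb-2222,LED Lighting\n"
)

# Hypothetical column names; replace with the ones in the actual file.
run_names = load_run_names(sample, "run_id", "alternative_name")
```

With the real file, `sample` would be replaced by `open("results.csv", newline="")`, and each folder name in the local results directory could then be looked up in `run_names`. That only works once I can download results.csv, which is exactly what the error above prevents.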

Thanks!